Platform · Vision

Run full DMS + ADAS on 10 TOPS — without taking CPU from your app.

Five camera input types converge on a shared-memory pipeline. Camera, GPU, and NPU exchange frames directly, and the CPU never copies pixel data. Run five concurrent models plus H.265 encode on a 10-TOPS-class device and keep CPU headroom free for your application logic.

Key claims

The numbers and design invariants.

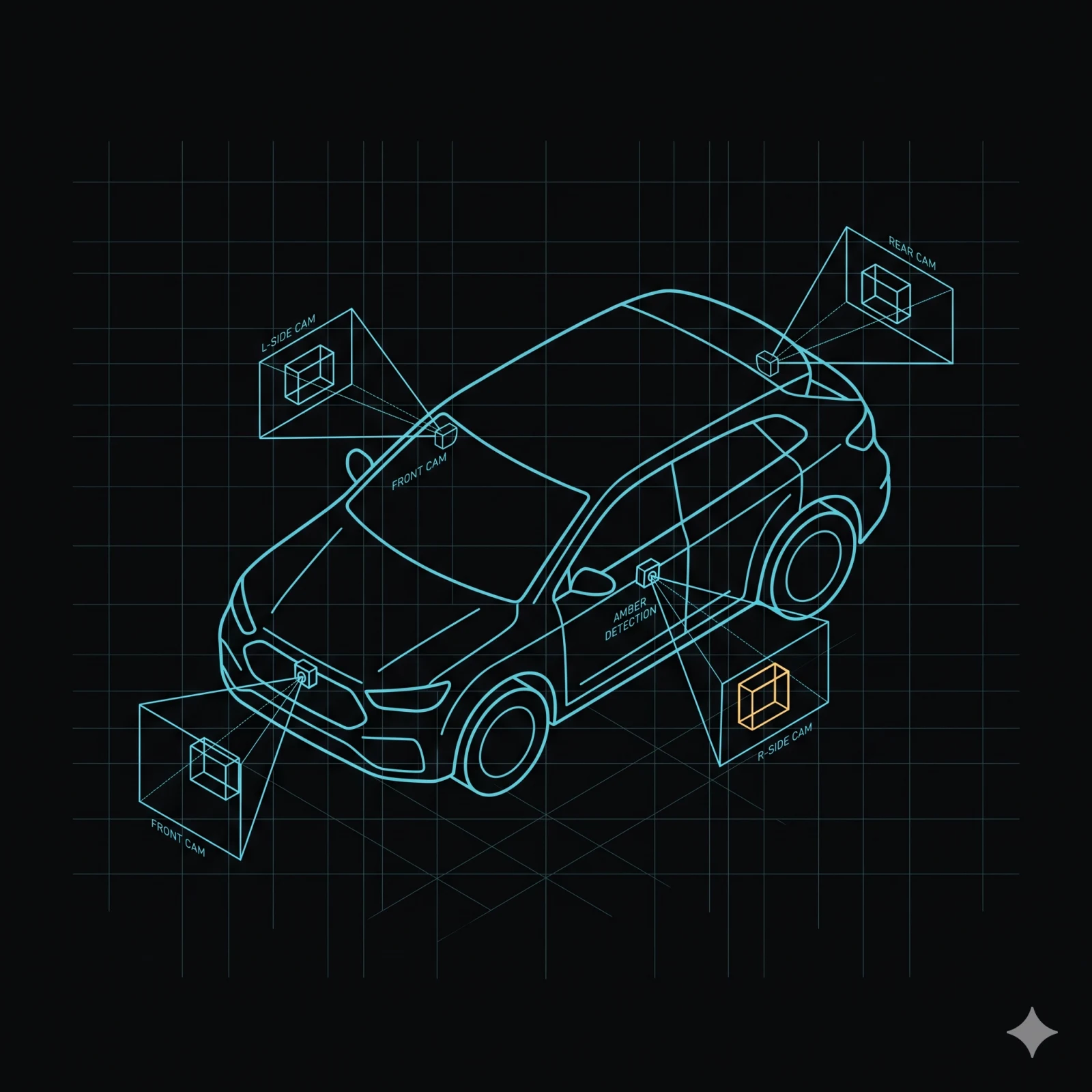

Camera capture

Five camera inputs. One unified API.

All five camera input types converge on the same shared-memory frame format, so integrators do not write per-platform pipelines.

Architecture

| Input | Silicon backend | Notes |
|---|---|---|
| MIPI-CSI | iMX8M Plus (direct) | Native MIPI interface; no external bridge |
| GMSL2 | QCS family (QMMF) | Qualcomm-only path; iMX8M Plus uses MIPI-CSI direct |
| USB UVC | Both | Standard V4L2 UVC class driver |
| Ethernet RTSP/ONVIF | Both | Network cameras; live RTSP streaming available |
| WebRTC | Both | Browser-compatible live streaming path |

Every backend normalises output to NV12 in shared GPU memory (dmabuf) before the tee (ADR-13). Five inputs, one entry contract.
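For reference, NV12 is a 4:2:0 format. It stores a full-resolution Y (luma) plane, followed by a half-height plane of interleaved U/V samples with one pair per 2×2 pixel block. The sketch below computes plane sizes and per-pixel byte offsets. It assumes a tightly packed buffer whose stride equals the width; real dmabuf allocations often pad the stride. `Nv12Layout` is a hypothetical name, not part of the platform API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Nv12Layout:
    """Byte layout of a tightly packed NV12 frame (assumes stride == width)."""
    width: int
    height: int

    def __post_init__(self) -> None:
        # 4:2:0 chroma subsampling needs even dimensions.
        if self.width % 2 or self.height % 2:
            raise ValueError("NV12 requires even width and height")

    @property
    def y_size(self) -> int:
        return self.width * self.height          # one luma byte per pixel

    @property
    def uv_size(self) -> int:
        return self.width * self.height // 2     # one U+V byte pair per 2x2 block

    @property
    def total_size(self) -> int:
        return self.y_size + self.uv_size        # 1.5 bytes per pixel overall

    def offsets(self, x: int, y: int) -> tuple[int, int, int]:
        """Return (y_offset, u_offset, v_offset) for pixel (x, y)."""
        y_off = y * self.width + x
        # The UV plane starts after luma; each UV row holds width/2 interleaved U,V pairs.
        u_off = self.y_size + (y // 2) * self.width + (x // 2) * 2
        return y_off, u_off, u_off + 1
```

At 1920×1080, this layout gives 3,110,400 bytes per frame. Handle passing keeps that volume off the CPU.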

Camera-to-consumers data flow. The sensor — MIPI-CSI, GMSL2, USB UVC, RTSP/ONVIF, or WebRTC — produces frames into shared GPU memory. The tee then fans the frame out to three consumers without copying: the GPU ROI shader for crop and resize, the dashcam encoder, and the visual + inertial pose tracker. The ROI shader feeds tensor handles to the AI runtime for NPU inference.

```mermaid
flowchart TD
    S[Camera<br/>MIPI-CSI · GMSL2 · UVC · RTSP/ONVIF · WebRTC]
    S --> G[Shared GPU memory<br/>NV12 frame]
    G --> T[Tee · entry contract]
    T --> R[GPU ROI shader<br/>crop and resize]
    T --> D[Dashcam encoder]
    T --> V[Pose tracker]
    R --> A[AI runtime<br/>NPU inference]
    class A ai-node
    class R ai-node
```

Frame transport

One frame, many consumers — each at its own rate.

One frame produced; many consumers subscribe at independent cadences. Slow consumers never stall the producer; dropped frames are tracked per topic.

60 Hz

Video

Full-rate frames to the dashcam encoder or any subscriber.

30 Hz

GPU crop and resize

ROI extraction at the NPU inference rate.

10 Hz

Pose tracking

The visual + inertial pose tracker subscribes at its own cadence, independently of the other subscribers.
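One common way to guarantee that slow consumers never stall the producer is a single-slot, latest-wins mailbox per subscriber. Publishing overwrites the slot, and an unread frame that gets overwritten counts as a drop for that topic. The sketch below assumes that design, plus simple decimation to derive each cadence from the producer rate (60 Hz to 30 Hz or 10 Hz). `Mailbox` and `Producer` are hypothetical classes that hold opaque frame handles, not pixels. The page does not describe the real transport at this level.

```python
import threading
from typing import Any, Optional


class Mailbox:
    """Single-slot, latest-wins mailbox: offering a frame never waits on the reader."""

    def __init__(self, divisor: int) -> None:
        self.divisor = divisor        # deliver every Nth frame (e.g. 60 Hz / 2 = 30 Hz)
        self.dropped = 0              # frames overwritten before being taken
        self._slot: Optional[Any] = None
        self._lock = threading.Lock() # held only for a pointer swap

    def offer(self, seq: int, handle: Any) -> None:
        if seq % self.divisor:
            return                    # not on this subscriber's cadence
        with self._lock:
            if self._slot is not None:
                self.dropped += 1     # previous frame was never consumed
            self._slot = handle

    def take(self) -> Optional[Any]:
        with self._lock:
            handle, self._slot = self._slot, None
            return handle


class Producer:
    def __init__(self) -> None:
        self.topics: dict[str, Mailbox] = {}
        self.seq = 0

    def subscribe(self, topic: str, producer_hz: int, consumer_hz: int) -> Mailbox:
        # Assumes consumer_hz divides producer_hz evenly.
        box = Mailbox(divisor=producer_hz // consumer_hz)
        self.topics[topic] = box
        return box

    def publish(self, handle: Any) -> None:
        # The same handle object goes to every mailbox; nothing is copied.
        for box in self.topics.values():
            box.offer(self.seq, handle)
        self.seq += 1
```

If a 30 Hz subscriber never reads, the producer keeps running, and that subscriber's drop counter climbs.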

Two-stage detection

Scan the whole frame fast, then re-look at parts that matter.

A triage model detects on the full frame, and refined models then re-examine only the regions of interest. The metadata loop between the AI runtime and the GPU ROI shader carries bounding boxes and a model ID. That is tens of bytes per detection, and no pixel data crosses the bus between stages.

Two-stage detection. The camera publishes the full frame to the AI runtime, which runs triage inference and produces detection bounding boxes with class labels and confidence scores. The runtime then sends bounding-box metadata and a model ID — tens of bytes, no pixel data — to the GPU ROI shader. The shader crops, resizes, and normalises each region and returns a tensor handle per ROI. The runtime then runs the refined inference per ROI.

```mermaid
sequenceDiagram
    participant Cam as Camera
    participant Ai as AI runtime
    participant Roi as GPU ROI shader
    Cam->>Ai: shared frame (full image)
    Note over Ai: triage inference<br/>detect bbox + class + confidence
    Ai->>Roi: SelectRois(bbox[], model_id): tens of bytes
    Note over Roi: GPU crop + resize + normalize per ROI
    Roi-->>Ai: tensor handle per ROI (1:N)
    Note over Ai: refined inference per ROI
```
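To make "tens of bytes" concrete, the sketch below packs a SelectRois-style request with Python's `struct` module. The wire layout is an assumption for illustration: a uint16 model ID and a uint16 ROI count, followed by four float32 box coordinates per ROI in normalised frame units. The page names `SelectRois(bbox[], model_id)` but does not specify its encoding, and both functions are hypothetical.

```python
import struct

# Hypothetical little-endian layout: header = model_id (u16) + ROI count (u16),
# then per ROI: x0, y0, x1, y1 as float32 in normalised [0, 1] frame coordinates.
HEADER = struct.Struct("<HH")
BBOX = struct.Struct("<4f")


def pack_select_rois(model_id: int, boxes: list[tuple[float, float, float, float]]) -> bytes:
    parts = [HEADER.pack(model_id, len(boxes))]
    parts += [BBOX.pack(*box) for box in boxes]
    return b"".join(parts)


def unpack_select_rois(payload: bytes) -> tuple[int, list[tuple[float, ...]]]:
    model_id, count = HEADER.unpack_from(payload, 0)
    boxes = [BBOX.unpack_from(payload, HEADER.size + i * BBOX.size) for i in range(count)]
    return model_id, boxes
```

Under this layout, three detections take 4 + 3 × 16 = 52 bytes. A single 1080p NV12 frame is 3,110,400 bytes.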

GPU crop and resize

Same code on both silicon families.

The GPU ROI shader performs crop, resize, and normalisation entirely on the GPU. The metadata loop carries only bounding boxes and a model ID, never pixels.
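The geometry behind crop plus aspect-preserving resize (letterboxing) fits in a few lines. This CPU-side sketch covers only the parameter math. It assumes each ROI is scaled uniformly to fit the model input and centred with padding; the shader's actual rounding and padding conventions are not documented here. `letterbox` and `to_roi_coords` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Letterbox:
    scale: float   # uniform scale applied to the ROI crop
    new_w: int     # resized crop width inside the model input
    new_h: int     # resized crop height inside the model input
    pad_x: int     # left padding, in model-input pixels
    pad_y: int     # top padding, in model-input pixels


def letterbox(roi_w: int, roi_h: int, in_w: int, in_h: int) -> Letterbox:
    """Fit a roi_w x roi_h crop into an in_w x in_h tensor without distortion."""
    scale = min(in_w / roi_w, in_h / roi_h)
    new_w, new_h = round(roi_w * scale), round(roi_h * scale)
    # Centre the resized crop; an odd leftover pixel goes to the right/bottom.
    return Letterbox(scale, new_w, new_h, (in_w - new_w) // 2, (in_h - new_h) // 2)


def to_roi_coords(lb: Letterbox, x: float, y: float) -> tuple[float, float]:
    """Map a point from model-input space back to ROI-crop space."""
    return (x - lb.pad_x) / lb.scale, (y - lb.pad_y) / lb.scale
```

The inverse mapping is what lets refined detections be reported back in frame coordinates after the crop.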

Architecture · per silicon family

iMX8M Plus

The GPU ROI shader is portable, and NPU inference runs via the silicon-vendor delegate. Direct GPU-to-NPU shared memory is not available on this platform, so the GPU output tensor is handed to the NPU as a standard tensor.

Qualcomm QCS family

The GPU ROI shader is portable, and GPU-to-NPU shared memory (rpcmem/ION) lets the NPU reuse the tensor without re-import. Binary-compatible across the AI-class tier, up to ~100 TOPS.

NPU inference

AI runtime: load models by path or bytes.

Load multiple models and run them concurrently. Deploy TFLite directly, or ONNX after automatic conversion. Same-model re-entry is prevented by construction.

API surface

| Method | Description |
|---|---|
| LoadModel | Load a .tflite model by path or bytes |
| UnloadModel | Release a model and its NPU resources |
| RunInference | Accept a dmabuf frame input (FramePayload::Dmabuf) and return detection tensors |
| ListModels | Enumerate loaded models with metadata |

Multiple models load and run concurrently; same-model re-entry is impossible by construction. Input is the shared GPU memory frame exclusively — no CPU pixel copy path exists in production code. ONNX models can be auto-converted before deployment.
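The page says same-model re-entry is prevented "by construction", which suggests an ownership or type-level guarantee that is not visible here. The sketch below substitutes a runtime guard: one non-blocking lock per loaded model. Different models can run concurrently, while a second call into a model that is already running is rejected. `ModelRegistry` and its methods are hypothetical Python stand-ins for LoadModel, RunInference, and related calls. The `infer` callable stands in for the NPU delegate, and the dmabuf handle is treated as an opaque value.

```python
import threading
from typing import Any, Callable, Dict


class ModelBusyError(RuntimeError):
    pass


class ModelRegistry:
    def __init__(self) -> None:
        self._models: Dict[str, Callable[[Any], Any]] = {}
        self._locks: Dict[str, threading.Lock] = {}

    def load_model(self, name: str, infer: Callable[[Any], Any]) -> None:
        # Stand-in for LoadModel: a real runtime would load a .tflite model here.
        self._models[name] = infer
        self._locks[name] = threading.Lock()

    def unload_model(self, name: str) -> None:
        del self._models[name], self._locks[name]

    def list_models(self) -> list:
        return sorted(self._models)

    def run_inference(self, name: str, dmabuf_handle: Any) -> Any:
        lock = self._locks[name]
        # Non-blocking acquire: a second call on a busy model fails fast instead of
        # re-entering it. Other models have their own locks and are unaffected.
        if not lock.acquire(blocking=False):
            raise ModelBusyError(f"model {name!r} is already running")
        try:
            return self._models[name](dmabuf_handle)
        finally:
            lock.release()
```

A compile-time guarantee, if that is what the runtime uses, would reject the re-entrant call before it could ever run. This sketch only catches it when it happens.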

Visual + inertial pose tracking

Visual + inertial + GNSS, fused on-device.

Pose tracking fuses camera, inertial, and GNSS data. Losing GNSS, for example indoors, triggers a graceful soft-fail rather than a hard failure, and map persistence survives reboots.

The ≤33 ms per-frame timing figure comes from a synthetic 300-frame harness, not a validated real-target benchmark. Real-target timing is pending.

Architecture

Visual front-end: corner detection plus oriented binary descriptors with RANSAC bootstrap. Inertial pre-integration with full covariance and GNSS fusion via factor-graph optimisation (sparse Cholesky). No external SLAM library dependency.
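The corner detector named in the implementation FAQ (FAST-9) is a segment test. A pixel counts as a corner if at least 9 contiguous pixels on a 16-pixel circle of radius 3 are all brighter, or all darker, than the centre by a threshold `t`. Below is a plain reference version of that test, not the on-device implementation. It omits the high-speed pre-check and non-maximum suppression, and it assumes the caller keeps `(x, y)` at least 3 pixels from the image border.

```python
# Bresenham circle of radius 3 around the candidate pixel, as (dx, dy), in ring order.
CIRCLE = [(0, -3), (1, -3), (2, -2), (3, -1), (3, 0), (3, 1), (2, 2), (1, 3),
          (0, 3), (-1, 3), (-2, 2), (-3, 1), (-3, 0), (-3, -1), (-2, -2), (-1, -3)]


def longest_circular_run(flags: list) -> int:
    """Length of the longest run of True values, allowing wrap-around."""
    best = run = 0
    for flag in flags + flags:           # doubling the list handles wrap-around
        run = run + 1 if flag else 0
        best = max(best, run)
    return min(best, len(flags))


def is_fast9_corner(img: list, x: int, y: int, t: int) -> bool:
    """Segment test on a row-major grayscale image stored as a list of rows."""
    centre = img[y][x]
    ring = [img[y + dy][x + dx] for dx, dy in CIRCLE]
    brighter = [p > centre + t for p in ring]
    darker = [p < centre - t for p in ring]
    return max(longest_circular_run(brighter), longest_circular_run(darker)) >= 9
```

A straight edge darkens only about half the ring (7 contiguous pixels here), so it fails the test. A convex corner darkens at least 9 contiguous pixels and passes.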

| Method | Description |
|---|---|
| StreamPose | 6-DOF pose with covariance (T_WB, PoseSource: VISUAL_ONLY or VISUAL_INERTIAL) |
| GetStatus | Current VSLAM health and tracking state |
| GetMapStats | Landmark count, keyframe count, loop-closure count |
| SaveMap | Atomic JSON write for reboot-persistent map |
| LoadMap | Restore a saved map to bootstrap tracking |
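StreamPose reports T_WB. Under the usual robotics subscript convention, this is the pose of body frame B expressed in world frame W: a rotation R_WB and translation t_WB, so that p_W = R_WB p_B + t_WB. That reading of the subscripts is an assumption. The sketch below applies it to a 4×4 homogeneous matrix in plain Python and shows the rigid inverse (world to body). `yaw_pose` is a hypothetical helper for building a test pose.

```python
import math

# 4x4 row-major homogeneous transform: [[r00, r01, r02, tx], ..., [0, 0, 0, 1]].


def transform_point(T: list, p: tuple) -> tuple:
    """Apply a homogeneous transform to a 3D point: returns R @ p + t."""
    return tuple(sum(T[i][j] * p[j] for j in range(3)) + T[i][3] for i in range(3))


def invert(T: list) -> list:
    """Inverse of a rigid transform: rotation R^T, translation -R^T t."""
    R_t = [[T[j][i] for j in range(3)] for i in range(3)]
    t = [T[i][3] for i in range(3)]
    t_inv = [-sum(R_t[i][j] * t[j] for j in range(3)) for i in range(3)]
    return [R_t[i] + [t_inv[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]


def yaw_pose(yaw_rad: float, tx: float, ty: float, tz: float) -> list:
    """Hypothetical helper: body yawed about world Z, then translated."""
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    return [[c, -s, 0.0, tx], [s, c, 0.0, ty], [0.0, 0.0, 1.0, tz], [0.0, 0.0, 0.0, 1.0]]
```

For example, with the body at (10, 0, 0) and yawed 90°, a point 1 m ahead of the body lands at approximately (10, 1, 0) in world coordinates.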

Map persistence survives reboots via atomic file write.
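"Atomic file write" usually refers to the write-to-temporary-then-rename pattern. The new map is written and flushed to a temporary file in the same directory, then renamed over the old one. A reader, or the next boot, therefore sees either the previous map or the complete new one, never a partial file. A Python sketch of that pattern follows. The function names and map schema are hypothetical, and the page does not list the exact steps SaveMap performs.

```python
import json
import os
import tempfile


def save_map_atomic(path: str, map_data: dict) -> None:
    directory = os.path.dirname(os.path.abspath(path))
    # The temp file must be on the same filesystem for os.replace to be atomic.
    fd, tmp_path = tempfile.mkstemp(dir=directory, prefix=".map-", suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as f:
            json.dump(map_data, f)
            f.flush()
            os.fsync(f.fileno())          # data reaches disk before the rename
        os.replace(tmp_path, path)        # atomic swap on POSIX filesystems
    except BaseException:
        os.unlink(tmp_path)               # never leave a half-written temp file
        raise
    # fsync the directory so the rename itself survives a power cut (POSIX only).
    dir_fd = os.open(directory, os.O_RDONLY)
    try:
        os.fsync(dir_fd)
    finally:
        os.close(dir_fd)


def load_map(path: str) -> dict:
    with open(path, encoding="utf-8") as f:
        return json.load(f)
```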

GDPR anonymisation

Live anonymisation, before any frame leaves the pipeline.

An optional pixelation stage anonymises frames before they reach the dashcam encoder or any downstream consumer. A boot-time policy toggle enables or disables it; live hot-toggling without a reboot is not supported today. Downstream consumers receive already-anonymised frames with no additional integration step.
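The page does not say how the pixelation stage works internally. One standard technique is a block mosaic, which replaces every k×k tile inside a sensitive region with its mean value. The sketch below applies a block mosaic to a single 8-bit plane stored as a list of rows. The function name, the region format, and the choice of block averaging are all assumptions. An on-device version would run on the GPU over the shared frame rather than in Python.

```python
def pixelate_region(plane: list, x0: int, y0: int, x1: int, y1: int, block: int = 8) -> None:
    """In-place block mosaic over [x0, x1) x [y0, y1) of a row-major 8-bit plane."""
    for by in range(y0, y1, block):
        for bx in range(x0, x1, block):
            ys = range(by, min(by + block, y1))   # clip edge tiles to the region
            xs = range(bx, min(bx + block, x1))
            total = sum(plane[y][x] for y in ys for x in xs)
            mean = total // (len(ys) * len(xs))   # integer mean of the tile
            for y in ys:
                for x in xs:
                    plane[y][x] = mean
```

Pixels outside the region are left untouched, so the rest of the frame keeps full detail.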

Observability

14 built-in metrics across the pipeline.

GPU ROI shader — 6 metrics

Calls, ROIs extracted, duration histogram, and 3 others. Integrates with the MOS4 observability pipeline with no per-component instrumentation code.

AI runtime — 8 metrics

Counters, histograms, and a gauge — including an inference-heartbeat watchdog with a 5 s budget. Same zero-instrumentation integration.
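The page mentions an inference-heartbeat watchdog with a 5 s budget but does not describe its mechanics. A typical design records a monotonic timestamp each time an inference completes and flags a stall once the gap exceeds the budget. The sketch below assumes that design. `HeartbeatWatchdog` and its methods are hypothetical, and the clock is injectable so the behaviour can be checked without sleeping.

```python
import time
from typing import Callable


class HeartbeatWatchdog:
    def __init__(self, budget_s: float = 5.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.budget_s = budget_s
        self._clock = clock            # monotonic clock: immune to wall-clock jumps
        self._last = clock()
        self.stalls = 0                # incremented on every failed check

    def beat(self) -> None:
        """Call after each completed inference."""
        self._last = self._clock()

    def seconds_since_beat(self) -> float:
        return self._clock() - self._last   # a natural value for a gauge metric

    def check(self) -> bool:
        """Return True if healthy; count a stall when the budget is exceeded."""
        if self.seconds_since_beat() > self.budget_s:
            self.stalls += 1
            return False
        return True
```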

FAQ

Frequently asked questions

Which camera inputs are supported?

Five: MIPI-CSI (direct on iMX8M Plus), GMSL2 (Qualcomm only), USB UVC, Ethernet RTSP/ONVIF, and WebRTC. Live RTSP and WebRTC streaming paths are both available.

Does the CPU ever touch pixel data?

No, by design. Camera, GPU, and NPU exchange the same shared-memory frame via handle passing. CPU pixel mapping is a spec violation in production builds.

Can I run multiple AI models concurrently with video encoding?

Yes. The pipeline is sized to run a full DMS + ADAS workload (5 models) plus H.265 encode on a 10-TOPS-class device with CPU headroom free for application logic.

How does GDPR anonymisation integrate?

An optional pixelation stage anonymises frames before they reach the dashcam encoder or any downstream consumer. Enabling or disabling requires a reboot. Downstream consumers receive already-anonymised frames with no integration step.

What does the pose tracking timing figure mean?

The ≤33 ms per-frame figure is from a synthetic 300-frame harness, not a validated real-target benchmark. Real-target benchmarking is in progress. The pipeline targets 30 FPS.

Architecture FAQ

Implementation details

How does ROI extraction work across silicon families?

The GPU ROI shader imports the shared GPU memory frame, runs letterbox + format conversion on the GPU, and outputs one tensor handle per ROI. The shader is portable across iMX8M Plus and Qualcomm targets. Qualcomm additionally supports direct GPU-to-NPU shared memory (rpcmem/ION) so the NPU reuses the tensor without re-import — that path is Qualcomm-specific.
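The format-conversion half of that step turns NV12 samples into the RGB input most vision models expect. The page does not state which colour matrix the shader uses. The sketch below assumes BT.601 limited-range coefficients; BT.709 is also common for HD sources. It shows the per-pixel arithmetic a shader would run, followed by scaling to [0, 1] as one possible normalisation. All function names are hypothetical.

```python
def clamp8(v: float) -> int:
    """Round and clamp to the 8-bit range."""
    return max(0, min(255, round(v)))


def yuv_to_rgb_bt601(y: int, u: int, v: int) -> tuple[int, int, int]:
    """BT.601 limited range: Y in [16, 235], U/V in [16, 240] centred on 128."""
    c, d, e = y - 16, u - 128, v - 128
    r = 1.164 * c + 1.596 * e
    g = 1.164 * c - 0.392 * d - 0.813 * e
    b = 1.164 * c + 2.017 * d
    return clamp8(r), clamp8(g), clamp8(b)


def normalise(rgb: tuple[int, int, int]) -> tuple[float, ...]:
    """One common model-input normalisation: scale 0..255 to 0.0..1.0."""
    return tuple(channel / 255.0 for channel in rgb)
```

On the GPU, the same three multiply-adds run per pixel, with U and V fetched from the shared 2×2 chroma sample.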

What does the pose tracking pipeline look like internally?

The visual front-end runs corner detection (FAST-9), oriented binary descriptors (BRIEF), and a RANSAC essential-matrix bootstrap. Inertial pre-integration follows Forster et al. (2017) with a 9×9 covariance. GNSS fusion uses factor-graph optimisation with sparse Cholesky solves. There is no external SLAM library dependency.
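For reference, here are the preintegrated IMU measurements from Forster et al. (2017), restated in their noise-free form. They summarise gyroscope readings $\tilde{\omega}_k$ and accelerometer readings $\tilde{a}_k$ between keyframes $i$ and $j$, with biases $b^g_i$ and $b^a_i$ held at their values at $i$ and with $\Delta R_{ii} = I$ and $\Delta v_{ii} = 0$. The 9×9 covariance is that of the stacked rotation, velocity, and position errors. It is propagated recursively from $\Sigma_{ii} = 0$ using the linearised error-state matrices $A_k$ and $B_k$ and the IMU noise covariance $\Sigma_\eta$. This summarises the published method, not this codebase's exact implementation.

$$
\begin{aligned}
\Delta R_{ij} &= \prod_{k=i}^{j-1} \operatorname{Exp}\big((\tilde{\omega}_k - b^g_i)\,\Delta t\big) \\
\Delta v_{ij} &= \sum_{k=i}^{j-1} \Delta R_{ik}\,(\tilde{a}_k - b^a_i)\,\Delta t \\
\Delta p_{ij} &= \sum_{k=i}^{j-1} \Big[\Delta v_{ik}\,\Delta t + \tfrac{1}{2}\,\Delta R_{ik}\,(\tilde{a}_k - b^a_i)\,\Delta t^2\Big] \\
\Sigma_{i,k+1} &= A_k\,\Sigma_{ik}\,A_k^{\top} + B_k\,\Sigma_{\eta}\,B_k^{\top}, \qquad \Sigma_{ik} \in \mathbb{R}^{9\times 9}
\end{aligned}
$$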

Bring a camera and a use case.

A live demo on Munic hardware — your office, your vehicle, or your machine. Talk to engineering to match the SoC tier to your latency and compute budget.