Built for production

A documented SDLC, SBOM, and CRA mapping on every release.

CycloneDX SBOM, cargo audit / geiger / deny, semgrep, and a public PSIRT process. The EU Cyber Resilience Act's main obligations apply from 11 December 2027, and MOS4 ships the compliance artefacts.

Vehicles · Machines · IoT — one OS

35 production-ready components. 129 features available. A 28.4 MB steady-state footprint and a 1.6 s boot on modem-class silicon.

Metrics tagged "modem-class reference profile" are measured on a representative modem-class production device. Steady-state RSS is taken at idle; first-app-ready boot is the time from kernel handoff to the first supervised micro service responding to a service call. Container budget is the MCM overhead envelope per the SDK reference profile.
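For readers who want to take a comparable idle RSS reading on a Linux target, one common approach is to read the `VmRSS` field of `/proc/<pid>/status`. The sketch below is an assumption about method, not the procedure behind the published figure; `parse_vmrss_kb` is a hypothetical helper and the sample text is illustrative.

```python
def parse_vmrss_kb(status_text: str) -> int:
    """Return the VmRSS value in kB from the text of /proc/<pid>/status."""
    for line in status_text.splitlines():
        if line.startswith("VmRSS:"):
            # Line format: "VmRSS:\t   12345 kB"
            return int(line.split()[1])
    raise ValueError("VmRSS field not found")


# Illustrative sample, not a measurement.
sample = "Name:\tdemo\nVmRSS:\t   12345 kB\nThreads:\t4\n"
rss_kb = parse_vmrss_kb(sample)
print(f"{rss_kb / 1024:.1f} MiB")
```

On a device, the same function can be fed the contents of `/proc/<pid>/status` for each supervised process and the results summed.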

Four conversations · One platform

Each pillar maps to a page the visitor can forward to the colleague who owns that decision. The CTO usually lands first; the others read what gets sent to them.

A documented SDLC, SBOM, and CRA mapping on every release.

CycloneDX SBOM, cargo audit / geiger / deny, semgrep, and a public PSIRT process. The EU Cyber Resilience Act's main obligations apply from 11 December 2027, and MOS4 ships the compliance artefacts.

One OS across a modem-class to AI-class silicon family — Munic ports it.

Munic curates the silicon family and ports MOS4 across it; your team picks the tier per product. A practical option alongside RTOS, hobby Linux, Android Automotive, or full ROS2.

Declare the AI in TOML. Camera, GPU, and NPU share memory — no CPU pixel copies.

Run a full DMS + ADAS workload (5 models) plus H.265 encode on a 10-TOPS-class device. Vision adds multi-camera capture, GPU crop and resize, and GDPR live anonymisation before any frame leaves the pipeline.

Python, Rust, C, C++, and Go nodes on a single platform.

Config for product managers. No-code engines (MSP, MEP, Multi Stacks, AI Funnel) for embedded engineers. Full code where it matters. Three programming tiers, your call per feature.

Three programming tiers

MOS4 is structured around three programming surfaces. Pick the tier per product, per feature, per engineer — and mix them in the same fleet.

Toggle features, set thresholds, ship variants — no code review needed. Declarative TOML, validated at build time.

MSP signal graphs. MEP state-machine policies. Multi Stacks vehicle and IoT comms. AI Funnel declarative edge AI.

Use what your team already writes, inside the lightweight container engine with enforced resource isolation.

Out of the box, together

The four engines are not silos. A single Python container, talking to the in-process MQTT broker, can drive MSP, MEP, Multi Stacks, and AI Funnel from one process — no Rust toolchain, no per-engine SDK, no custom glue.

```python
# `c` is an already-connected MQTT client (the callback signature matches paho-mqtt).
# yaml_str, policy_str, stack_json, and handle() come from the application.
import json

c.publish(
    "mos/msp/LoadGraph",
    json.dumps({"name": "harsh_brake", "yaml": yaml_str}),
)

c.publish(
    "mos/mep/LoadPolicy",
    json.dumps({"name": "geofence", "yaml": policy_str}),
)

c.publish(
    "mos/multi-stacks/LoadStack",
    json.dumps({"name": "j1939_truck", "json": stack_json}),
)

# Receive AI Funnel detections on the same client.
c.subscribe("mos/ai-runtime/detections")
c.on_message = lambda _c, _u, m: handle(m.payload)
```

Same MQTT client, four engines. Any MQTT-capable language (Python, Rust, C, C++, Go, JavaScript) drives the same surface.
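The three load calls above share one shape: a topic plus a JSON body carrying a name and a definition. As a sketch, a small helper can build that pair without a broker, which makes it easy to unit-test. The topics and body keys are copied from the snippet; `LOAD_TOPICS` and `load_request` are hypothetical names, not part of any MOS4 SDK.

```python
import json

# Topic and body-key pairs copied from the snippet above.
LOAD_TOPICS = {
    "msp": ("mos/msp/LoadGraph", "yaml"),
    "mep": ("mos/mep/LoadPolicy", "yaml"),
    "multi-stacks": ("mos/multi-stacks/LoadStack", "json"),
}


def load_request(engine: str, name: str, body: str) -> tuple[str, str]:
    """Return the (topic, JSON payload) pair for one engine load call."""
    topic, body_key = LOAD_TOPICS[engine]
    return topic, json.dumps({"name": name, body_key: body})


topic, payload = load_request("mep", "geofence", "policy: {}")
# With a connected client this would be sent as: c.publish(topic, payload)
print(topic)
```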

The pipeline

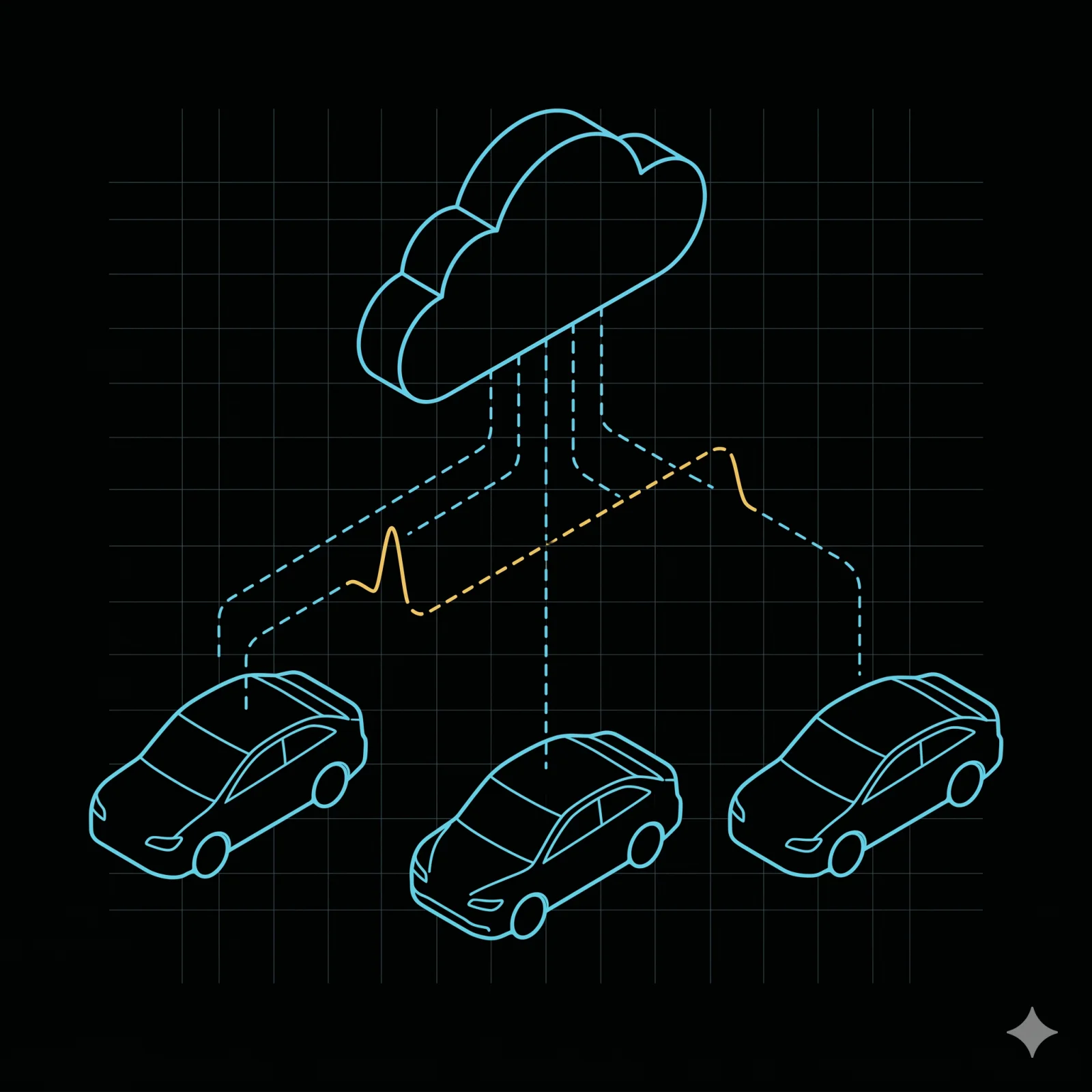

Every MOS4 product runs the same four-engine pipeline: bus and IoT decode, signal processing, state-machine policy, and declarative edge AI — then branches to the on-device runtime and the Munic cloud in the same OTA cycle.

Four-engine pipeline. Vehicle bus and industrial-IoT inputs (CAN, CAN-FD, J1939, Modbus) feed Multi Stacks; sensor inputs (camera, IMU, GNSS) feed MSP signal-processing graphs. Multi Stacks decoded signals also feed MSP. MSP outputs feed MEP, the state-machine policy engine (T·C·A primitives under the hood). MEP outputs feed AI Funnel, the declarative edge-AI engine. AI Funnel branches to two destinations in the same OTA cycle: an on-device NPU/GPU/CPU runtime, and the Munic cloud for retraining and OTA delivery.

```mermaid
flowchart LR
    Bus[CAN · CAN-FD · J1939 · Modbus] --> MS[Multi Stacks]
    Sensors[Camera · IMU · GNSS] --> MSP[MSP graphs]
    MS --> MSP
    MSP --> MEP[MEP — state-machine policy]
    MEP --> AI[AI Funnel]
    AI --> RT[On-device NPU / GPU / CPU runtime]
    AI --> Cloud[Munic cloud — OTA + retrain]
    class AI ai-node
    class RT ai-node
```
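The pipeline described above is a directed acyclic graph, so a valid processing order can be derived from the edges alone. The sketch below encodes the edges from the description and sorts them with the standard library; the dictionary is an illustration of the topology and does not reflect how MOS4 schedules its engines.

```python
from graphlib import TopologicalSorter  # standard library since Python 3.9

# Each key lists the stages it depends on, following the pipeline description.
PIPELINE = {
    "Multi Stacks": {"Bus"},
    "MSP": {"Sensors", "Multi Stacks"},
    "MEP": {"MSP"},
    "AI Funnel": {"MEP"},
    "On-device runtime": {"AI Funnel"},
    "Munic cloud": {"AI Funnel"},
}

# static_order() yields every stage after all of its dependencies.
order = list(TopologicalSorter(PIPELINE).static_order())
print(order)
```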

| Metric | MOS 3.x | MOS4 (modem-class reference) |
|---|---|---|
| First-app-ready boot | ~90 s | 1.6 s |
| Steady-state RSS | ~60 MB | 28.4 MB |
| Minimal micro service (user-written Rust) | n/a | < 30 lines |
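The ratios implied by the table can be worked out directly. Because the MOS 3.x figures are approximate (marked "~"), the results below are approximate too.

```python
# Figures from the table; the MOS 3.x values are approximate ("~").
boot_old_s, boot_new_s = 90.0, 1.6
rss_old_mb, rss_new_mb = 60.0, 28.4

boot_speedup = boot_old_s / boot_new_s      # 56.25
rss_reduction = 1 - rss_new_mb / rss_old_mb  # about 0.527

print(f"boot: about {boot_speedup:.0f}x faster")
print(f"RSS: about {rss_reduction:.0%} smaller")
```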

AI Funnel

Customers ship a TOML graph plus an ONNX/TFLite model and a COCO dataset. Camera, GPU, and NPU share memory directly — the CPU never copies pixel data. Run multiple concurrent models with H.265 encode on a 10-TOPS-class device.

A TOML graph, ONNX/TFLite models, a COCO dataset, and an optional business-logic container.

Retrain, quantise, validate, benchmark, package, and OTA the unified triage model.

GPU crop and resize, NPU inference, shared memory end-to-end. No pixel bytes traverse the CPU.

35 production components · 129 features

Every supervised component is process-isolated, with explicit typed interfaces, its own CI pipeline, and per-component resource limits. GNSS, modem, OTA, power, vehicle-bus firmware — already there.

Cloud egress

The end-to-end cloud path: component in container → MQTT bridge → communication gateway → cloud-connect → cloud microservice → GraphQL mesh → customer application.

Cloud egress topology — component to GraphQL mesh

```mermaid
flowchart LR
    A[Component in container] --> B[MQTT bridge]
    B --> C[Communication gateway]
    C --> D[cloud-connect]
    D --> E[cloud microservice]
    E --> F[GraphQL mesh]
    F --> G[Customer app]
```

Public GraphQL gateway reference: gateway.integration.munic.io/services/graphql_gateway/docs/

Container runtime

From end-of-compile to first successful service call on a real device: 10 seconds. Python, Rust, C, C++, and Go nodes run side by side under enforced resource limits, with under 5% CPU/RAM overhead. Any language with an MQTT client works.

Resource limits per container as a contract, not best-effort — from day one.

Python, Rust, C, C++, Go — existing nodes drop in without rewrite. ROS2 nodes ride along via the sidecar pattern.

10 seconds from end-of-compile to first successful service call on a real device.

Under 5% CPU/RAM overhead: the MCM container budget envelope, measured on the SDK reference profile.
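One way to read "limits as a contract" is as an admission check: the declared per-container limits must fit on the device once the runtime's overhead share is set aside. The sketch below uses the 5% figure quoted above as that share. The `Limit` type, the `fits` function, and every number in the example are hypothetical, and nothing here describes how MOS4 actually enforces limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    name: str
    ram_mb: float
    cpu_pct: float


def fits(limits: list[Limit], device_ram_mb: float, overhead: float = 0.05) -> bool:
    """True if the declared limits fit once the runtime overhead share is reserved."""
    usable = 1.0 - overhead
    ram_ok = sum(item.ram_mb for item in limits) <= device_ram_mb * usable
    cpu_ok = sum(item.cpu_pct for item in limits) <= 100.0 * usable
    return ram_ok and cpu_ok


# Illustrative numbers only, not measured values.
containers = [Limit("python-node", 64, 30), Limit("rust-node", 32, 20)]
print(fits(containers, device_ram_mb=256))
```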

SDLC · CI · compliance

63 .proto files in mos-interfaces cover 46 service interfaces. On every commit, every micro service goes through cargo audit, cargo deny, semgrep static analysis, and cargo cyclonedx, which produces the CycloneDX SBOM.
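A per-commit gate built from these tools could be scripted roughly as follows. The tool names come from the text; the subcommands and flags (`deny check`, `--format json`, `scan --config auto --error`) and the ordering are assumptions to verify against each tool's own help output, and `ci_steps` and `run_ci` are hypothetical names.

```python
import subprocess


def ci_steps() -> list[list[str]]:
    """Commands for the tools named in the text, in one possible order."""
    return [
        ["cargo", "audit"],
        ["cargo", "deny", "check"],
        ["cargo", "cyclonedx", "--format", "json"],
        ["semgrep", "scan", "--config", "auto", "--error"],
    ]


def run_ci(workdir: str) -> None:
    """Run every step in workdir, stopping at the first failure."""
    for argv in ci_steps():
        # check=True raises CalledProcessError on a non-zero exit code.
        subprocess.run(argv, cwd=workdir, check=True)


if __name__ == "__main__":
    # Print the plan only; call run_ci(path) inside a crate to execute it.
    for argv in ci_steps():
        print(" ".join(argv))
```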

Solutions

Pick the vertical closest to your product. Each page covers the capabilities most relevant to that buyer — and links to the components already in production for it.

EV makers, performance OEMs, specialty platforms.

Off-highway, ISOBUS, agriculture.

Telematics, OBD heavy duty, fleet.

Industrial automation, autonomous arms and mowers.

Dashcam, multi-camera, fleet-wide vision.

Article-by-article CRA mapping, SBOM, security pipeline, PSIRT process.

A 30-minute discovery call with engineering — no slide deck, no NDA, a direct conversation about fit.